Introduction

As developers and CTOs, we're always on the lookout for innovative ways to integrate AI into our business processes. Recently, I took on an exciting challenge: developing an agent-based sales outreach tool that sent over a thousand personalized emails for a side project.

This technical blog post aims to share the insights gained from this experiment, providing both a comprehensive overview of the system's architecture and a solid foundation for developers looking to build their own AI agents.

By diving into the technical details, I hope to inspire fellow developers to explore the potential of AI agents in their own work. Whether you're looking to enhance your outreach efforts or simply curious about practical AI applications, this guide offers valuable insights.

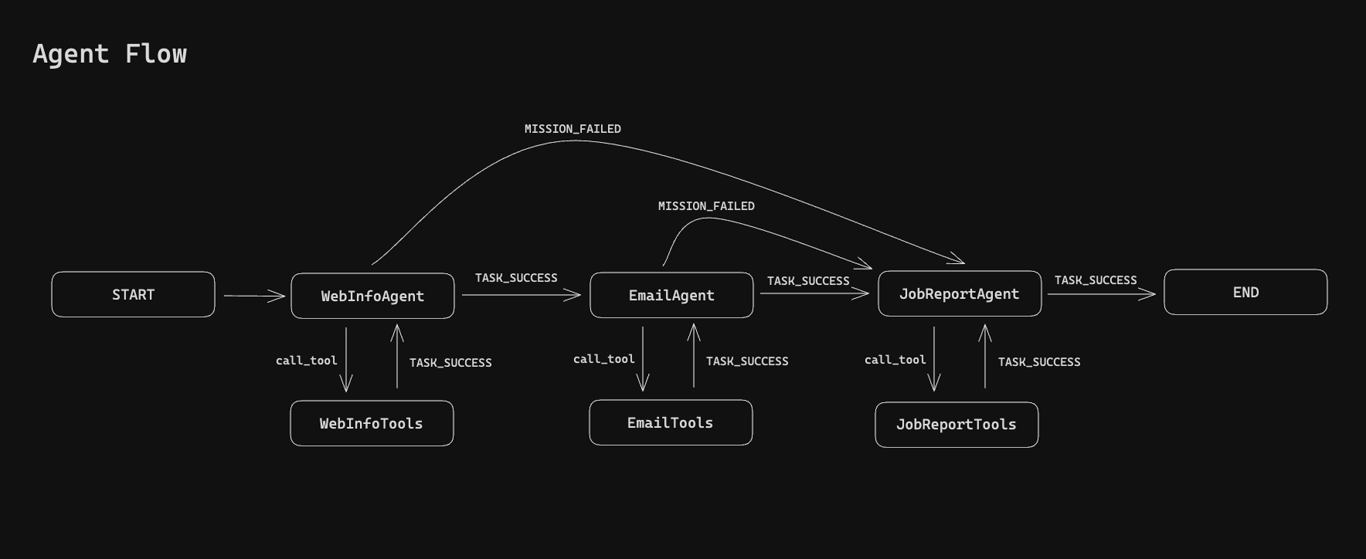

High-Level Architecture Overview

The core of this project is built on LangGraph, an innovative agent workflow framework developed by LangChain. LangGraph uses a graph structure to define workflows, enabling various agent architectures including supervisor-based, hierarchical, and multi-agent collaborations.

Our implementation features a multi-agent workflow with three main types of agents:

WebInfoAgent: Gathers information about the subject to understand the context of the outreach.

EmailAgent: Crafts personalized outreach emails based on the research report.

JobReportAgent: Reports whether the task succeeded or failed.

This workflow is designed for seamless handoffs between agents. Importantly, any agent can mark the mission as failed at any point, triggering the JobReportAgent to report the failure.

Key Components

Task Scheduling and Job Creation: Scripts that scan platforms like Hacker News and GitHub to generate potential outreach targets.

Data Enrichment: A process that gathers additional information about the targets using APIs and web scraping techniques.

Job Database: Stores enriched data ready for AI agents to process.

LangGraph Implementation: Defines the agent workflow and interactions.

LangSmith: Used for observability and monitoring of agent interactions.

Here's a basic example of how a LangGraph workflow might be structured:

// in `src/agentWorkflow/githubBlogWorkflowLogic.ts:154`
const workflow = new StateGraph({
  channels: agentStateChannels,
})
  .addNode('WebInfoAgent', webInfoAgentNode)
  .addNode('WebInfoTools', webInfoToolNode)
  .addNode('EmailAgent', emailAgentNode)
  .addNode('EmailTools', emailToolNode)
  .addNode('JobReportAgent', jobReportAgentNode)
  .addNode('JobReportTools', jobReportToolsNode)
  .addEdge(START, 'WebInfoAgent')
  .addConditionalEdges('WebInfoAgent', standardAgentRouter, {...})
  .addConditionalEdges('WebInfoTools', standardToolRouter, {...})
  .addConditionalEdges('EmailAgent', standardAgentRouter, {...})
  .addConditionalEdges('JobReportAgent', standardAgentRouter, {...})
  .addConditionalEdges('EmailTools', standardToolRouter, {...})
  .addConditionalEdges('JobReportTools', standardToolRouter, {...})
  .compile();

return workflow;

This code snippet provides a glimpse into the structure of our AI agent workflow. While it may look straightforward, there's significant complexity in the implementation details.

In the following sections, we'll delve deeper into each component of this system, exploring the challenges faced and solutions developed. Whether you're looking to implement a similar system or simply curious about the potential of AI in business processes, the insights shared here should prove valuable.

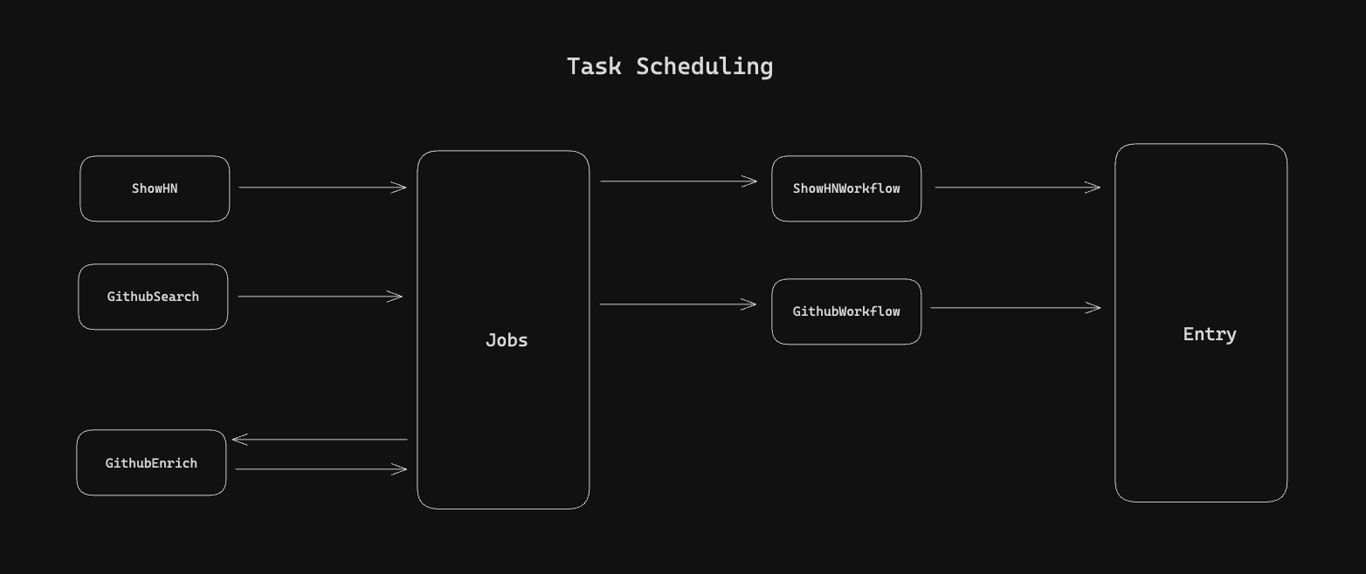

Task Scheduling and Job Creation

The foundation of our AI-driven outreach system lies in efficient task scheduling and job creation. This process involves identifying potential targets and preparing the necessary data for our AI agents to work with.

Sources of Tasks

Our GitHub scraper identifies repositories with active blogs or personal websites. It targets developers who might benefit from our CMS solution.
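One practical constraint on this search step: GitHub's search API returns at most 1,000 results per query and at most 100 per page, so a scheduler has to decide which pages to request. The sketch below shows one way to plan those requests. `planSearchPages` and its constants are hypothetical names written for this illustration, not part of the project's code.

```typescript
// Hypothetical helper (not from the original project): decide which result
// pages to request from GitHub's repository search.
const GITHUB_SEARCH_RESULT_CAP = 1000; // GitHub search exposes at most 1,000 results per query
const GITHUB_MAX_PER_PAGE = 100; // and at most 100 results per page

const planSearchPages = (totalCount: number, perPage: number = GITHUB_MAX_PER_PAGE): number[] => {
  // Clamp the page size into the range the API accepts.
  const pageSize = Math.min(Math.max(1, Math.floor(perPage)), GITHUB_MAX_PER_PAGE);
  // Results beyond the cap cannot be fetched, however many matches exist.
  const reachable = Math.min(Math.max(0, totalCount), GITHUB_SEARCH_RESULT_CAP);
  const pageCount = Math.ceil(reachable / pageSize);
  // Pages are 1-indexed in the GitHub API.
  return Array.from({ length: pageCount }, (_, i) => i + 1);
};
```

When a query matches more repositories than the cap allows, narrowing it (for example by star range or language) is the usual way to reach the rest.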

// In `src/jobSchedulers/github/search.ts`
// Searching for relevant GitHub repositories
export const buildSearchQuery = ({
  query,
  language,
  stars,
  sort = 'updated',
  order = 'desc',
  perPage = 100,
  page = 1,
}: SearchQueryParams): string => {
  let queryString = `q=${encodeURIComponent(query)}`;
  if (language) {
    queryString += `+language:${encodeURIComponent(language)}`;
  }
  if (stars) {
    queryString += `+stars:>=${stars}`;
  }
  queryString += `&sort=${sort}&order=${order}&per_page=${perPage}&page=${page}`;
  return queryString;
};

export const fetchSearchResults = async (query: SearchQueryParams) => {
  const queryString = buildSearchQuery(query);
  const { data } = await githubAxios.get<SearchResults>(`/search/repositories?${queryString}`);
  return data;
};

Data Enrichment Process

Once we have our initial list of targets from GitHub, we enrich this data to provide our AI agents with more context. This process involves:

Fetching contact info from commit messages

Fetching latest commit info (to understand what the developer is up to recently)

Fetching file tree in repository (to understand what framework is used)
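As an illustration of the first step, here is a minimal sketch of pulling a contact address out of commit data. It assumes the address comes from the author fields that GitHub's commits API returns, and that the API lists commits newest first. `findOwnerEmail` and `CommitSummary` are hypothetical names; the project's own `extractInfoFromCommit` is not shown in this post and may work differently.

```typescript
// Minimal shape of the fields used here, modelled on GitHub's "list commits" response.
interface CommitSummary {
  commit: { author: { name: string; email: string } | null; message: string };
  author: { login: string } | null;
}

// Hypothetical helper: return the most recent usable email the repo owner committed with.
const findOwnerEmail = (commits: CommitSummary[], ownerLogin: string): string | null => {
  for (const { commit, author } of commits) {
    // Only trust commits attributed to the repository owner's account.
    if (author?.login !== ownerLogin) continue;
    const email = commit.author?.email.trim().toLowerCase();
    if (!email) continue;
    // GitHub privacy addresses (...@users.noreply.github.com) cannot receive mail.
    if (email.endsWith('@users.noreply.github.com')) continue;
    return email;
  }
  return null;
};
```

Returning `null` rather than guessing lets the caller skip the target or fall back to addresses found on the website later in the workflow.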

// In `src/jobSchedulers/github/enrichImportantValues.ts`

// Enriching initial search results

export const enrichImportantValues = async (importantValues: ImportantValues) => {

const { commitsUrl, owner } = importantValues;

const { data: commits } = await githubAxios.get<GithubCommit[]>(commitsUrl.replace('{/sha}', ''));

const recentCommitInfo = extractInfoFromCommit(commits, owner.login);

const filesInRepository = await fetchTreeInfo(recentCommitInfo.latestTree);

const latestCommitInfo = await fetchCommitCommitDiffInfo(commits[0]);

return {

...importantValues,

recentCommitInfo,

filesInRepository,

latestCommitInfo,

};

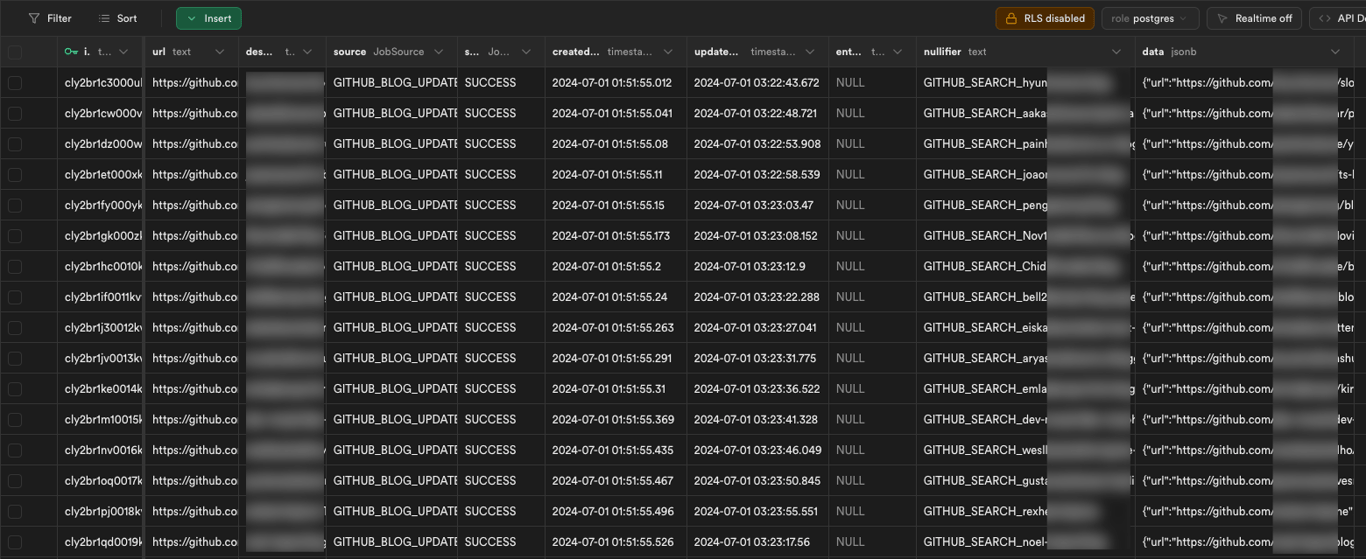

};Job Database

We use a PostgreSQL database hosted on Supabase to store our enriched job data. This allows for efficient querying and management of our outreach tasks.

Tools and Their Functions

Our AI-driven outreach system relies on a set of specialized tools to handle various tasks efficiently. These tools are crucial for job reporting, email sending, and website crawling. Let's dive into each of these tools and their specific functions.

Job Reporting Tool

The job reporting tool is essential for tracking the progress and outcomes of our outreach efforts. It interfaces with our Supabase database to log each step of the process.

Key features:

Logs the start and completion of each job

Records success or failure status

Stores any error messages or notable events

Provides a summary of actions taken by each agent

This tool allows us to maintain a detailed record of each outreach attempt, which is invaluable for analysis and improvement of our system.

// In `src/tools/jobReportTool.ts`
// To file the job status report
export const jobReportTool = new DynamicStructuredTool({
  name: 'job-report-tool',
  description: `Files a report about the job when it's processed`,
  schema: z.object({
    website: z.string().describe('The website of the job'),
    email: z.string().optional().nullable().describe('The email address that was sent an email'),
    emailSubject: z.string().optional().nullable().describe('The subject of the email'),
    emailHtml: z.string().optional().nullable().describe('The HTML content of the email'),
    utmCampaign: z.string().optional().nullable().describe('The UTM campaign of the email'),
    founderName: z.string().optional().nullable().describe('The name of the founder'),
    offering: z.string().optional().nullable().describe('The product/service offered by the website'),
    targetAudience: z.string().optional().nullable().describe('The target audience of the product/service'),
    jobToBeDone: z.string().optional().nullable().describe('The job to be done by the target audience.'),
    alternativeEmails: z.string().optional().nullable().describe('The alternative emails of the company'),
    socialLinks: z.string().array().optional().nullable().describe('The social links of the website'),
    blogUrl: z.string().optional().nullable().describe('The blog URL of the website'),
    githubUrl: z.string().optional().nullable().describe('Associated GitHub URL to the website'),
    jobId: z.string().optional().nullable().describe('The job ID of the job'),
    remarks: z.string().optional().nullable().describe('Additional remarks about the job'),
    updatedJobStatus: z.enum(['FAILED', 'SUCCESS']).describe('The updated job status'),
  }),
  func: async (props) => fileReport(props),
});

Emailing Tool

The emailing tool is responsible for sending out the personalized emails crafted by our EmailAgent. It's designed to handle high volumes of emails while maintaining deliverability.

Key features:

Sends emails using SMTP or API-based email services

Tracks email delivery status

// In `src/tools/resendTool.ts`
// To send emails to recipient
export const emailTool = new DynamicStructuredTool({
  name: 'email-sending-tool',
  description: 'Sends an email to a recipient',
  schema: z.object({
    to: z.string().array().describe('List of emails to send to'),
    subject: z.string().describe('Subject of the email'),
    html: z.string().describe('HTML content of the email'),
    text: z.string().describe('Text content of the email'),
  }),
  func: async (props) => sendEmail(props),
});

Website Crawling Tool

The website crawling tool is crucial for gathering information about our outreach targets. It's used by the WebInfoAgent to extract relevant data from blogs and personal websites.

Key features:

Fetches and parses HTML content

Renders client-side pages in a headless browser before reading them

Extracts text and links

// In `src/tools/websiteCrawlingTool.ts`
export const fetchWebpage = async (url: string, isFullMode: boolean) => {
  logInfo(`Running tool: WebsiteCrawlingTool[${isFullMode ? 'FULL-MODE' : 'LITE-MODE'}]`);
  logInfo(`Fetching webpage: ${url}`);
  try {
    const browser = await chromium.launch();
    let content: string;
    try {
      const page = await browser.newPage();
      await page.goto(url);
      content = await page.content();
    } finally {
      // Close the browser even when navigation throws, so failed fetches don't leak processes
      await browser.close();
    }
    if (isFullMode) {
      return JSON.stringify({ url, content });
    }
    // Use Cheerio to parse the page content to only get the text content and links
    const $ = cheerio.load(content);
    $('script, style, iframe').remove();
    const textContent = $('body')
      .find('*')
      .contents()
      .filter(function (this: any) {
        return this.type === 'text';
      })
      .map((_, el) => $(el).text().trim())
      .get()
      .join(' ');
    const links = $('a')
      .map((_, element) => {
        return {
          href: $(element).attr('href'), // Get the href attribute
          text: $(element).text(), // Get the anchor text
        };
      })
      .get();
    return JSON.stringify({ url, textContent, links });
  } catch (e) {
    logError(e);
    // JSON.stringify on an Error yields "{}", so pass the message back to the agent explicitly
    return JSON.stringify({ url, error: e instanceof Error ? e.message : String(e) });
  }
};

These tools form the backbone of our AI-driven outreach system, enabling our agents to gather information, send personalized emails, and track the results effectively. In the next section, we'll explore how we integrate these tools with our AI agents to create a cohesive and efficient workflow.

Agent Types and Their Roles

Our AI-driven outreach system relies on three specialized agent types, each designed to handle specific tasks within the workflow. Let's examine each agent type and its role in detail.

WebInfoAgent

The WebInfoAgent is responsible for gathering and synthesizing information about the outreach target.

Key responsibilities:

Crawl the target's website or blog

Extract relevant information from social media profiles

Analyze content to identify key topics and interests

Here's a simplified implementation of the WebInfoAgent:

// In `src/agents/webInfoAgent.ts`
export const buildWebInfoAgent = async ({ llm, context, compulsoryFields, optionalFields }: WebInfoAgentConfig) => {
  const compulsoryFieldText = compulsoryFields.map(({ key, description }) => `${key} - ${description}`).join('\n');
  const optionalFieldText = optionalFields.map(({ key, description }) => `${key} - ${description}`).join('\n');
  const systemMessage = `You are tasked with finding certain information by traversing a website. ${context}\n\nYou must find the following fields:\n${compulsoryFieldText}\n\n\nDuring your discovery, you should also record relevant information of the following optional fields:\n${optionalFieldText}\n\nYour task is only completed when you've found ALL the compulsory fields. Anytime you've all the compulsory fields you may report that the task is completed, regardless if you have all the optional fields. Terminate when you have visited more than 6 pages. DO NOT navigate to anchored element on the same page (ie /#something). Visit the minimum number of pages to find the required information.`;
  return createAgent({
    llm,
    tools: [websiteCrawlingTool],
    systemMessage,
  });
};

EmailAgent

The EmailAgent crafts personalized emails based on the information gathered by the WebInfoAgent.

Key responsibilities:

Generate personalized email content

Ensure the email tone matches the target's profile

Include relevant details to increase engagement

Here's a basic implementation of the EmailAgent. Note that it accepts a customised context, instruction, and examples, which are combined into the system message:

// In `src/agents/emailAgent.ts`
export const buildEmailAgent = async ({ llm, context, instruction, examples }: EmailAgentConfig) => {
  const exampleText =
    examples && examples.length > 0
      ? 'Reference emails to send\n' + examples.map((example) => `<example>\n${example}</example>`).join('\n')
      : '';
  const systemMessage = `You are tasked to craft and send an email using the email-sending-tool tool.\n${context}\n\n${instruction}\n\n${exampleText}`;
  return createAgent({
    llm,
    tools: [resendEmailTool],
    systemMessage,
  });
};

JobReportAgent

The JobReportAgent handles the final stage of the workflow, recording the outcome of the outreach attempt.

Key responsibilities:

Log the result of the email send attempt

Record any feedback or responses received

Update the job status in the database

Here's a simple implementation of the JobReportAgent:

// In `src/agents/jobReportAgent.ts`
export const buildJobReportAgent = async ({ llm }: JobReportAgentConfig) => {
  const systemMessage = `Your job is to report on the status of the business development outreach at the end, remember that all the info is about the target website and their audience. Use the conversation provided to fill in the details. The job is considered successful if the email is sent. If the job is not successful, please provide a reason under additional remarks. Also file relevant information about the company so that we can track the progress in a CRM after. REMEMBER IF THERE IS A MISSION_FAIL FROM THE PREVIOUS AGENT, PLEASE FILE A REPORT WITH 'FAILED' STATUS.`;
  return createAgent({
    llm,
    tools: [jobReportTool],
    systemMessage,
  });
};

Configuring the Agent Workflow

The agent workflow is configured using LangGraph, which allows us to define the structure and logic of our multi-agent system.

Graph Structure

The graph structure defines how our agents interact and the flow of information between them.
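The `agentStateChannels` object passed to `StateGraph` is not shown in this post. As a rough illustration of the idea, and assuming the "reducer plus default value" channel shape that LangGraph's JavaScript API accepted when this was written, a message-accumulating state could be modelled as follows. The types, the `sender` field, and the `applyUpdate` helper are simplified stand-ins invented for this sketch; they are not LangGraph exports and may not match the project's real state.

```typescript
// Simplified stand-ins for illustration only; these are not LangGraph types.
interface ChatMessage {
  role: 'human' | 'ai' | 'tool';
  content: string;
}

interface AgentState {
  messages: ChatMessage[];
  sender: string;
}

// A channel pairs a reducer (how to merge a node's output) with a default value.
type Channel<T> = { value: (prev: T, next: T) => T; default: () => T };

const agentStateChannels: { messages: Channel<ChatMessage[]>; sender: Channel<string> } = {
  // Each node returns only its new messages; the reducer appends them to the history.
  messages: { value: (prev, next) => prev.concat(next), default: () => [] },
  // The latest writer wins, so a router can tell which agent spoke last.
  sender: { value: (_prev, next) => next, default: () => 'user' },
};

// Merge a node's partial output into the current state, channel by channel.
const applyUpdate = (state: AgentState, update: Partial<AgentState>): AgentState => ({
  messages:
    update.messages !== undefined
      ? agentStateChannels.messages.value(state.messages, update.messages)
      : state.messages,
  sender:
    update.sender !== undefined
      ? agentStateChannels.sender.value(state.sender, update.sender)
      : state.sender,
});
```

Because every agent and tool node appends to the same `messages` history, a later agent such as the JobReportAgent can read everything earlier agents found without any explicit hand-off payload.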

// in `src/agentWorkflow/githubBlogWorkflowLogic.ts:154`
const workflow = new StateGraph({
  channels: agentStateChannels,
})
  .addNode('WebInfoAgent', webInfoAgentNode)
  .addNode('WebInfoTools', webInfoToolNode)
  .addNode('EmailAgent', emailAgentNode)
  .addNode('EmailTools', emailToolNode)
  .addNode('JobReportAgent', jobReportAgentNode)
  .addNode('JobReportTools', jobReportToolsNode)
  .addEdge(START, 'WebInfoAgent')
  .addConditionalEdges('WebInfoAgent', standardAgentRouter, {...})
  .addConditionalEdges('WebInfoTools', standardToolRouter, {...})
  .addConditionalEdges('EmailAgent', standardAgentRouter, {...})
  .addConditionalEdges('JobReportAgent', standardAgentRouter, {...})
  .addConditionalEdges('EmailTools', standardToolRouter, {...})
  .addConditionalEdges('JobReportTools', standardToolRouter, {...})
  .compile();

return workflow;

Node Initialization

Each node in the graph represents either an agent or the tool executor paired with it. When initializing agent nodes, we pass the necessary language model (LLM) and prompt configuration to each agent.

// Example initializing EmailAgent
const emailAgentNode = await buildEmailAgentNode({
  llm: gpt4o,
  context: `My name is Raymond and I'm a founder of a startup called wisp. wisp is a headless cms made to easily add blogs to any existing website. We focus on creating a delightful editorial experience similar to that of medium.com or notion. Developers can now update their site without pushing code changes, avoid having assets like images collocating with code. They can also stop messing around with markdown. Your goal is craft AND SEND an email to the developer based on the information you found to address pain points (if any) and how our product can help them.`,
  instruction: `Write a personalized email to the developer of the blog/repo, making use of information found in earlier steps. It should be a short email that says hi, talk about their pain point (if any) and offer our blogging solution if it's appropriate. You offer value in a way that makes them feel like they have readership and feel seen. Link to specific blog post they've written whenever possible, and optionally add your thoughts. Avoid generic comments like "super impressive stuffs" but be specific about what you are commenting on. Find an appropriate text to link them to wisp's website (https://www.wisp.blog/), with utm parameters (utm_source=gh_blog, utm_medium=email, utm_campaign to use developer username). Overall the email should be snappy and be 300 character or less to make it easy to read. Use a conversational tone. For the subject, make use of a curiosity gap (an interesting question, statement or observation you've made on their site or materials) to make them want to read it, don't use marketing terms like boost, unlock, etc. Also, use sentence case to make the subject more conversational / intriguing. Remember to avoid selling our features too hard, just mention them in passing remarks. IMPORTANT: If you notice that the user is not english native (observe commit message and blog content), write the email in their language. Mark the task as finished only when the email is sent.`,
  examples: example_emails,
});

Conditional Edges and Routing Logic

We can add conditional logic to our workflow to handle different scenarios, such as failures or specific conditions.

// Defining routes for WebInfoAgent
const standardAgentRouter = <T extends BaseStateTemplate>(state: T) => {
  const messages = state.messages;
  const lastMessage = messages[messages.length - 1];
  if (isAIMessageWithToolCalls(lastMessage)) {
    return 'call_tool';
  }
  if (typeof lastMessage.content === 'string' && lastMessage.content.includes(PREFIX_TASK_FINISHED)) {
    return 'task_finished';
  }
  return 'mission_failed';
};

...

const workflow = new StateGraph({
  channels: agentStateChannels,
})
  .addNode('WebInfoAgent', webInfoAgentNode)
  .addEdge(START, 'WebInfoAgent')
  .addConditionalEdges('WebInfoAgent', standardAgentRouter, {
    call_tool: 'WebInfoTools',
    task_finished: 'EmailAgent',
    mission_failed: 'JobReportAgent',
  })
...

This configuration allows our workflow to adapt to different outcomes, ensuring that each job is processed appropriately regardless of success or failure at any stage.
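To see the three-way routing in isolation, here is a self-contained sketch of the same decision over plain objects. The `Message` shape, the `'TASK_FINISHED'` value given to `PREFIX_TASK_FINISHED`, and the `webInfoRoutes` table are assumptions made for this example; the real project works with LangChain message classes and defines its own constant.

```typescript
// Assumed value; the project's actual PREFIX_TASK_FINISHED constant is not shown in this post.
const PREFIX_TASK_FINISHED = 'TASK_FINISHED';

// Plain-object stand-in for a LangChain message.
interface Message {
  content: string;
  toolCalls?: { name: string; args: Record<string, unknown> }[];
}

type Route = 'call_tool' | 'task_finished' | 'mission_failed';

const routeAgentOutput = (messages: Message[]): Route => {
  const last = messages[messages.length - 1];
  // An empty history means the agent produced nothing usable.
  if (!last) return 'mission_failed';
  // A pending tool call takes priority: run the tool, then return to the agent.
  if (last.toolCalls && last.toolCalls.length > 0) return 'call_tool';
  // The agent signals completion by including the agreed prefix in its reply.
  if (last.content.includes(PREFIX_TASK_FINISHED)) return 'task_finished';
  // Anything else (refusals, dead ends, missing prefix) is treated as a failure.
  return 'mission_failed';
};

// Destination table for the WebInfoAgent, mirroring its conditional edges.
const webInfoRoutes: Record<Route, string> = {
  call_tool: 'WebInfoTools',
  task_finished: 'EmailAgent',
  mission_failed: 'JobReportAgent',
};

const nextNode = (messages: Message[]): string => webInfoRoutes[routeAgentOutput(messages)];
```

Because the fall-through case is `mission_failed`, an agent that neither calls a tool nor emits the finish prefix is handed to the JobReportAgent instead of looping.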

By carefully designing our agents and configuring the workflow, we create a flexible and robust system capable of handling complex outreach tasks. The next section covers the challenges encountered while building it.

Challenges and Lessons Learned

Implementing this AI-driven outreach system wasn't without its hurdles. The biggest challenge was getting the agents to work together smoothly. At first, our WebInfoAgent struggled with inconsistent data extraction, leading to subpar email content and excessive token consumption. I solved this by refining our web scraping tools to return only text content and links and improving the agent's prompts.
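A related way to trim what the crawler returns, offered here as an idea and not as something the project is known to do, is to tidy the link list before handing it to the agent: resolve relative URLs, drop same-page anchors (which the WebInfoAgent prompt already tells the model to ignore), and remove duplicates. `tidyLinks` and `PageLink` are hypothetical names for this sketch.

```typescript
// Shape of a link as extracted from the page; href may be missing on some anchors.
interface PageLink {
  href?: string;
  text: string;
}

// Hypothetical helper: resolve, filter, and de-duplicate links found on a page.
const tidyLinks = (pageUrl: string, links: PageLink[]): { href: string; text: string }[] => {
  const base = new URL(pageUrl);
  base.hash = '';
  const seen = new Set<string>();
  const result: { href: string; text: string }[] = [];
  for (const { href, text } of links) {
    if (!href) continue;
    let resolved: URL;
    try {
      resolved = new URL(href, base); // resolves relative paths against the page URL
    } catch {
      continue; // skip malformed hrefs
    }
    if (resolved.protocol === 'javascript:') continue; // script pseudo-links carry no destination
    resolved.hash = ''; // "/about#team" and "/about" are the same page
    const key = resolved.toString();
    // Skip links back to the current page and anything already collected.
    if (key === base.toString() || seen.has(key)) continue;
    seen.add(key);
    result.push({ href: key, text: text.trim() });
  }
  return result;
};
```

`mailto:` links are deliberately kept, since a contact address is exactly what the WebInfoAgent is looking for.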

Another issue was the EmailAgent's tendency to generate overly generic messages. I tackled this by expanding our prompt to include more specific instructions and examples of personalized, engaging emails. The learning curve was steep, but each obstacle taught us valuable lessons about AI system design and natural language processing.

Looking ahead to version 2, I'm focusing on scalability. I plan to implement a more robust job queue system and explore ways to parallelize agent tasks. I'm also considering adding a learning component that allows the system to improve its performance based on feedback and success rates.

Conclusion

This project demonstrates the potential of AI-driven systems in automating complex business processes. By combining web scraping, natural language processing, and a multi-agent workflow, we've created a tool that can significantly streamline outreach efforts.

Key takeaways:

AI agents can work together to handle complex, multi-step tasks

Careful prompt engineering is crucial for generating high-quality, personalized content

Monitoring and continuous improvement are essential for maintaining system effectiveness

I've open-sourced the code for this project, and invite developers to explore, fork, and build upon the work. Whether you're looking to implement a similar system or just curious about AI applications in business, there's plenty to learn from this codebase.

Check out the repository, dive into the code, and see how you can adapt it to your own use cases. The future of AI-driven business processes is here, and it's up to us as developers to shape it.